Your AI Isn't Untrustworthy — It's Unverifiable

Posted by Johanna Evans

We’ve spent the last two years trying to make AI sound more trustworthy.

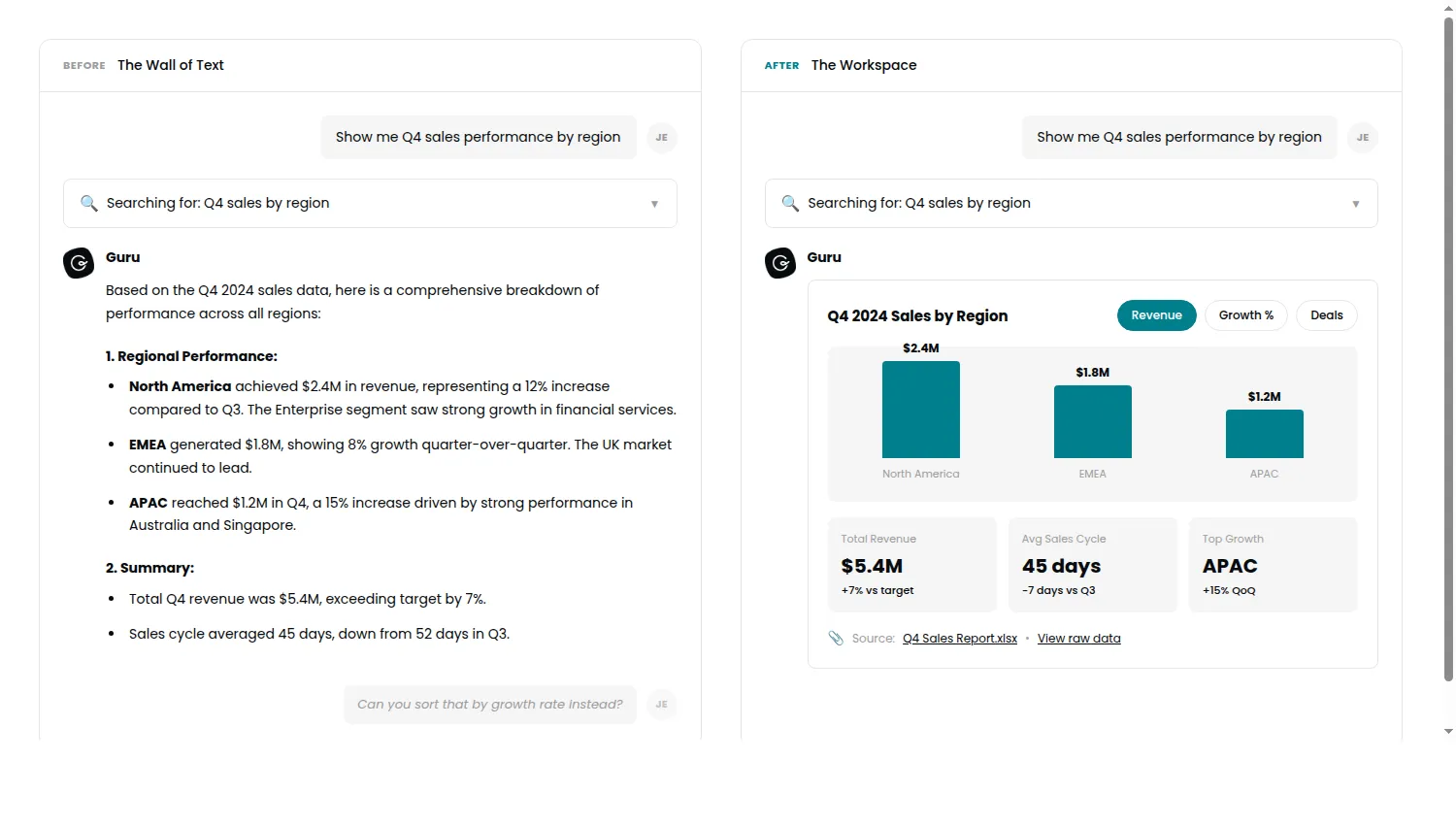

Citations at the bottom. Confidence caveats sprinkled throughout. “Based on the available information…” We’ve treated trust as a tone-of-voice problem, as if the right words in the right order would finally convince users to believe what the AI tells them.

It’s not working.

I know this because every Friday, I talk to a customer who uses our AI features. Not a summary from the research team. Not a dashboard. An actual conversation with someone trying to get work done. And I keep hearing variations of the same thing:

“I can see where the answer came from. I just have to wade through all of it.”

The wall of text isn’t a design limitation. It’s a trust barrier we built and then tried to paper over with better copywriting.

Trust requires visible proof

In my former life as a physician, I didn’t write patients a long explanation of why they were healthy. I walked them to the screen and showed them. The imaging. The lab values. The evidence. Trust isn’t built through reassuring words. It’s built through visible proof and connection.

There’s a reason we say “seeing is believing” and not “reading is believing.” Our brains process visual evidence differently than text. When you read a claim, you’re evaluating an argument. When you see the source, you’re verifying a fact. One asks for trust. The other earns it.

AI has been asking users to take its word for it. That’s not trust. That’s faith. And faith is a fragile foundation for enterprise software.

The follow-up prompt tax

Here’s what the wall of text actually costs you.

A user asks your AI a question. They get 800 words back. Their next message is almost always some version of “can you sort these by date?” or “show me just the ones from Q3.”

Harald Kirschner from VS Code calls this the “choose-your-own-adventure prompt” style. Users navigate by typing commands instead of clicking. Every follow-up is a tax. It’s friction. It’s a moment where the user is doing work the interface should have done for them. And worse, it’s a moment where trust erodes, because the user is now interrogating the AI rather than collaborating with it.

Three follow-up prompts to get to something useful isn’t a conversation. It’s an interrogation.

Something is shifting

New infrastructure is emerging that changes what’s possible. The Model Context Protocol (an open standard now backed by Anthropic, OpenAI, and the Linux Foundation) recently added support for interactive UI components within AI responses. Not just text. Dashboards. Forms. Filterable tables. Clickable sources that take you directly to the evidence.

This isn’t about making chatbots prettier. It’s about making AI responses explorable instead of just readable.

Imagine asking an AI to analyze a contract and instead of getting a summary you have to trust, you get the PDF with specific clauses highlighted. You can click to approve or flag sections. You can see exactly what the AI saw.

Or asking for sales data and instead of a text narrative, getting an interactive chart you can filter by region, by quarter, by rep. No follow-up prompt required.
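For the engineers in the room, here is roughly what "no follow-up prompt required" could look like underneath. This is a minimal sketch, not the MCP API: the `SalesRow` and `RowFilter` types, both function names, and every data row are invented for illustration. The point is only that when the response carries structured rows instead of prose, "show me just the ones from Q3" and "sort these by date" become local operations on data the user already has.

```typescript
// Hypothetical response payload: structured rows instead of an 800-word narrative.
// None of these names come from MCP or any real SDK.
interface SalesRow {
  region: string;
  quarter: "Q1" | "Q2" | "Q3" | "Q4";
  rep: string;
  closedOn: string; // ISO 8601 date, e.g. "2024-09-02"
  amount: number;
}

// Each field is optional; an omitted field means "do not filter on this".
interface RowFilter {
  region?: string;
  quarter?: SalesRow["quarter"];
  rep?: string;
}

// Keep only the rows that match every field set on the filter.
function filterRows(rows: SalesRow[], filter: RowFilter): SalesRow[] {
  return rows.filter(
    (row) =>
      (filter.region === undefined || row.region === filter.region) &&
      (filter.quarter === undefined || row.quarter === filter.quarter) &&
      (filter.rep === undefined || row.rep === filter.rep)
  );
}

// ISO dates compare correctly as strings. Copy first so the input is not mutated.
function sortByDate(rows: SalesRow[]): SalesRow[] {
  return [...rows].sort((a, b) => a.closedOn.localeCompare(b.closedOn));
}

// Made-up sample data, purely illustrative.
const rows: SalesRow[] = [
  { region: "EMEA", quarter: "Q3", rep: "Avery", closedOn: "2024-09-02", amount: 12000 },
  { region: "AMER", quarter: "Q2", rep: "Blake", closedOn: "2024-05-17", amount: 8000 },
  { region: "EMEA", quarter: "Q3", rep: "Casey", closedOn: "2024-07-21", amount: 15000 },
  { region: "APAC", quarter: "Q4", rep: "Avery", closedOn: "2024-10-05", amount: 9500 },
];

// "Show me just the ones from Q3, sorted by date" becomes a click, not a prompt.
const q3ByDate = sortByDate(filterRows(rows, { quarter: "Q3" }));
console.log(q3ByDate.map((row) => `${row.closedOn} ${row.rep} ${row.amount}`));
```

A real interactive component would wire these two functions to dropdowns and column headers. The model is asked once; the user does the exploring.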

The response becomes a place of connection, not a wall of text.

As Kirschner puts it, these interfaces finally include “the missing human step” — when the workflow needs a decision, a selection, or exploration, the UI provides the right interaction at the right time. Text tells you what happened. Interaction lets you verify it yourself.

The myth of human-in-the-loop

We talk a lot about “human in the loop” as if it’s a checkbox we’ve already checked. User can see the output? Human in the loop. User can thumbs-up or thumbs-down? Human in the loop.

But that’s not a loop. That’s a dead end.

Real human-in-the-loop requires the human to have agency: the ability to manipulate, explore, verify, and course-correct. You can’t stay in the loop if the interface doesn’t give you anything to do.

Direct manipulation beats explanation. Every time.

What this means for design leaders

If you’re building AI features, here’s the question worth sitting with:

Are you designing for confidence, or for auditability?

Confidence is what the AI projects. Auditability is what the user can verify.

We’ve been optimizing for confidence. Making the AI sound sure, adding caveats to seem humble, crafting responses that feel authoritative. But confidence without auditability is just marketing.

The teams that get this right will stop asking “how do we make the AI sound trustworthy?” and start asking “how do we make the AI’s reasoning visible?”
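One way to make that second question concrete is to treat auditability as a property of the response data rather than its tone. The sketch below is an assumption on my part, not an established schema: `Claim`, `SourceSpan`, and `unverifiableClaims` are hypothetical names, and the contract excerpts are invented. It simply requires each claim to carry the source spans that back it, then surfaces any claim the user has no way to check.

```typescript
// Hypothetical structure: every claim the AI makes points at the evidence behind it.
interface SourceSpan {
  documentId: string; // which document the evidence lives in
  page: number;       // where the interface should scroll or highlight
  quote: string;      // the exact text the claim rests on
}

interface Claim {
  text: string;
  evidence: SourceSpan[];
}

// A claim with no evidence can be read, but it cannot be verified.
function unverifiableClaims(claims: Claim[]): Claim[] {
  return claims.filter((claim) => claim.evidence.length === 0);
}

// Invented example: two claims from a contract summary.
const summary: Claim[] = [
  {
    text: "Either party may terminate with 30 days' written notice.",
    evidence: [
      {
        documentId: "contract.pdf",
        page: 4,
        quote: "upon thirty (30) days' prior written notice",
      },
    ],
  },
  {
    text: "Liability is capped at the fees paid.",
    evidence: [], // nothing for the user to click through to
  },
];

// The interface can flag these instead of presenting them with the same confidence.
for (const claim of unverifiableClaims(summary)) {
  console.log(`No source to verify: ${claim.text}`);
}
```

Under this framing, a confident sentence with an empty `evidence` list is exactly the "marketing" case: it sounds sure, and the user can do nothing with it.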

The wall of text was never the answer. It was the constraint we accepted because we didn’t have better options.

Now we do.

The question is whether we’ll use them to actually solve the trust problem — or just build fancier walls.

Harald Kirschner quotes sourced from the MCP Apps announcement